Fact or FUD: The Misrepresented Danger of Tesla Autopilot

Written By: Dheepan Ramanan

Tesla has been in the news a lot since our previous piece on autonomous driving. This is the first of two parts covering recent Tesla developments, and it focuses on the crash in Texas. Part 2 will cover Biden’s climate plan, Q1 earnings, and potential macroeconomic tailwinds.

Are Teslas Safe?

Last week, two men were killed after their Tesla crashed into a tree at high speed and caught fire. Notably, neither occupant was found in the driver’s seat when first responders arrived at the scene. With only these limited facts in hand, many media outlets conjectured that Tesla’s Autopilot was involved and potentially at fault. The National Highway Traffic Safety Administration (NHTSA) is currently investigating 23 accidents involving Autopilot. Amid this media firestorm, the average person could be forgiven for feeling FUD (fear, uncertainty, and doubt) about the company. Many are concerned about Tesla’s Autopilot technology and, more generally, about whether any battery-powered electric vehicle is safe. A sober look at the data and the technologies involved dispels these fears rather quickly. In fact, the data show Tesla’s Autopilot safety steadily improving over time.

Electric Vehicle Fires Are Less Likely than Conventional Gasoline Cars

Tesla publishes vehicle safety records for crashes on a quarterly basis and for fire-related incidents on an annual basis. Although batteries can catch fire in high-impact accidents, the published data show that this risk is significantly lower than with gasoline cars. According to Tesla’s data, its electric vehicles experience a fire once every 205 million miles driven, compared with once every 19 million miles for conventional gasoline vehicles. These figures include fires caused by arson and structure fires, which are unrelated to crashes.
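As a rough sanity check, the two figures can be converted into fires per billion miles and compared directly. This is a minimal Python sketch that takes both numbers at face value; the constant and function names are illustrative, and the calculation makes no adjustment for differences between the two vehicle populations.

```python
# Figures quoted above: miles driven per vehicle fire (taken at face value).
TESLA_MILES_PER_FIRE = 205_000_000
GASOLINE_MILES_PER_FIRE = 19_000_000


def fires_per_billion_miles(miles_per_fire: float) -> float:
    """Convert a 'one fire every N miles' figure into fires per billion miles."""
    return 1_000_000_000 / miles_per_fire


tesla_rate = fires_per_billion_miles(TESLA_MILES_PER_FIRE)
gas_rate = fires_per_billion_miles(GASOLINE_MILES_PER_FIRE)
# How many times more miles a Tesla travels, on average, between fires.
ratio = TESLA_MILES_PER_FIRE / GASOLINE_MILES_PER_FIRE

print(f"Tesla:    {tesla_rate:.1f} fires per billion miles")  # 4.9
print(f"Gasoline: {gas_rate:.1f} fires per billion miles")    # 52.6
print(f"Ratio:    {ratio:.1f}x")                               # 10.8x
```

On these numbers, the quoted Tesla figure works out to roughly a tenth of the gasoline fire rate per mile.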

Did Tesla Autopilot Cause this Accident?

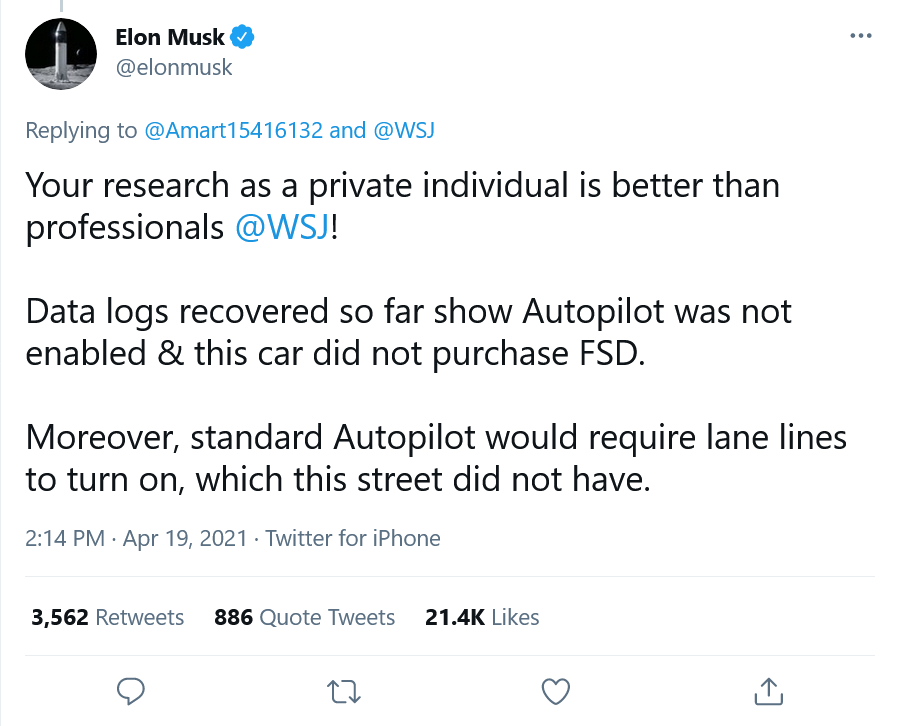

Tesla’s Autopilot system is a driver assistance feature that allows Teslas to “steer, accelerate and brake automatically within its lane”. Tesla’s manual requires drivers to keep their attention on the road and their hands on the wheel while Autopilot is engaged. Although there have been crashes while Teslas were in Autopilot, this accident was not one of them.

One possibility is that the driver engaged cruise control and then accidentally pressed the accelerator instead of the brake. The occupants may have intended to misuse Autopilot deliberately but, through user error, never actually engaged it. Given the safety mechanisms built into Autopilot, this is the more likely scenario.

This is a much less sensational explanation, and it is not unlike the Toyota recall of 2010. At the time, the media played up the idea that the electronic throttle control in Toyota’s cars was causing unintended acceleration. After the NHTSA investigation, which included NASA engineers reviewing over 280,000 lines of code, investigators concluded that driver error contributed to most of the incidents: people were accidentally pressing the accelerator instead of the brake.

Technological skepticism is natural, and it’s the media’s job to be adversarial. However, the phrase “if it bleeds, it leads” exists for good reason, and as an investor it’s important to be aware of this bias. News coverage focuses on exceptional human-interest stories, which are by definition uncommon. Fear and anger are among the strongest emotions driving web traffic, and this bias creates a self-perpetuating loop that distorts the statistical truth. For example, it’s well documented that people’s perception of crime far exceeds its actual occurrence.

Although many other vehicles have features similar to Autopilot, Tesla is a far more prominent brand, which draws more media attention. After Tesla refuted the claim that Autopilot was involved in the crash, the media critique shifted.

Spinning the Narrative

Although Autopilot was not involved in the crash, outlets such as the New York Times and Consumer Reports shifted the narrative to Tesla’s alleged irresponsibility. The new argument ran that even if Tesla’s technology was not directly at fault, its “marketing,” or its lack of driver safety features for Autopilot, indirectly contributes to more accidents.

Consumer Reports’ (CR) coverage was by far the most absurd. To prove that it was possible to engage Autopilot while sitting in the passenger seat, CR did the following:

Fisher engaged Autopilot while the car was in motion on the track, then set the speed dial (on the right spoke of the steering wheel) to 0, which brought the car to a complete stop. Fisher next placed a small, weighted chain on the steering wheel, to simulate the weight of a driver’s hand, and slid over into the front passenger seat without opening any of the vehicle’s doors, because that would disengage Autopilot. Using the same steering wheel dial, which controls multiple functions in addition to Autopilot’s speed, Fisher reached over and was able to accelerate the vehicle from a full stop. He stopped the vehicle by dialing the speed back down to zero.

In contrast to the hacky setup CR built to achieve this feat, its headline read: “CR Engineers Show a Tesla Will Drive With No One in the Driver's Seat”. Obviously, no real customer would replicate CR’s experiment, but the headlines spawned by the piece glossed over the ridiculous lengths CR went to in order to create this scenario.

Later in the piece, CR criticized Tesla for not including camera-based driver monitoring as an additional Autopilot safety mechanism. Similarly, in October 2020 CR rated Tesla’s system the best driver assistance system when it came to driving tasks but criticized its safety features. Although this is a perfectly valid critique, CR nestled it in click-bait content that reinforced the existing media cycle around the Texas crash.

More nebulous is the critique, from other outlets and from media members on Twitter, that Tesla’s marketing creates a false perception among consumers.

Others point to videos of users improperly using Autopilot and the Full Self-Driving (FSD) beta as proof that Tesla’s branding leads to improper usage. All of this evidence seems anecdotal at best.

Tesla simply has the most vehicles on the road with these technologies. Moreover, users who have blatantly misused the technology have been banned from the FSD beta. To critics’ credit, an IIHS study found that more Tesla users thought it was appropriate to take their hands off the wheel while using Autopilot than did users of other brands’ systems. Tesla’s existing safety technologies explicitly cover this scenario. Perhaps what is happening here (beyond marketing) is that once users have used the technology, they feel overly secure about its safety; after all, CR itself found Tesla to have the best driver assistance. The study’s results also do not make clear whether Tesla drivers used Autopilot more often than customers used other driver assistance technologies.

Without true comparative accident data, this critique feels patronizing to consumers. In effect, the New York Times’ Neal Boudette and others suggest that consumers may be willing to risk their lives based on pure marketing hype.

Tesla’s Autopilot is 10x Safer than the Average Car

Contrary to many media stories, Tesla’s Autopilot safety has actually improved over time. I collected the data points from Tesla’s quarterly safety reports and computed a yearly (four-quarter) moving average of the miles driven per accident to dampen quarterly volatility.
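The smoothing step can be sketched as a trailing four-quarter mean. The post does not spell out exactly how the yearly average was computed, so this minimal Python sketch assumes a simple mean of the last four quarterly miles-per-accident figures. `rolling_mean` is a hypothetical helper, and the sample numbers are made up for illustration; they are not Tesla’s reported data.

```python
from typing import List, Sequence


def rolling_mean(values: Sequence[float], window: int = 4) -> List[float]:
    """Trailing mean over `window` consecutive values.

    Returns one average per full window, so the output has
    len(values) - window + 1 entries (none if there are too few values).
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        sum(values[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(values))
    ]


# Hypothetical quarterly figures, in thousands of miles per accident
# (illustrative only, NOT Tesla's published numbers).
quarterly = [3000, 3200, 3400, 3600, 4000]
smoothed = rolling_mean(quarterly)
print(smoothed)  # [3300.0, 3550.0]
```

One design choice worth noting: averaging the quarterly ratios weights each quarter equally, regardless of how many miles were driven in it.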

Even as Tesla’s fleet has grown to over 1.5 million vehicles, the likelihood of getting into an accident with Autopilot engaged has decreased. The rolling average of miles driven per crash increased from around 3.1 million in Q2 2019 to 4.2 million in Q1 2021. Tesla’s methodology counts all accidents in which Autopilot was deactivated within 5 seconds before the crash, as well as accidents in which the Tesla was not at fault.

Interestingly, Tesla’s other two reported metrics, for driving without Autopilot engaged, show no substantial gain in safety over time. Both metrics are nonetheless significantly higher than the figure for vehicles overall, which stayed roughly constant at 480,000 miles driven per crash. This highlights one of the chief advantages of over-the-air updates: the software underlying Autopilot can be improved and iterated on after the car is sold. It’s likely that Teslas on the road today are safer than they were a year ago purely because of software updates.
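Putting this section’s numbers side by side gives the arithmetic behind the comparison. This minimal Python sketch takes the quoted figures at face value, even though the Autopilot figure is a rolling average from Tesla’s reports and the all-vehicle figure is a separate national baseline; the constant and function names are illustrative.

```python
# Figures quoted in this section, in miles driven per crash.
AUTOPILOT_Q2_2019 = 3_100_000  # rolling average, Q2 2019
AUTOPILOT_Q1_2021 = 4_200_000  # rolling average, Q1 2021
ALL_VEHICLES = 480_000         # overall average for all vehicles


def times_fewer_crashes(miles_per_crash: float, baseline: float) -> float:
    """How many times fewer crashes per mile than the baseline."""
    return miles_per_crash / baseline


# Relative increase in miles per crash with Autopilot engaged.
improvement = (AUTOPILOT_Q1_2021 - AUTOPILOT_Q2_2019) / AUTOPILOT_Q2_2019
ratio = times_fewer_crashes(AUTOPILOT_Q1_2021, ALL_VEHICLES)

print(f"Improvement since Q2 2019: {improvement:.1%}")  # 35.5%
print(f"Autopilot vs. all vehicles: {ratio:.2f}x")       # 8.75x
```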

Concluding Thoughts

It’s in Tesla’s best interest to build the safest vehicles on the market. Although Tesla is now a $700 billion market-cap company, this success did not come overnight. The brand’s reputation was hard-won over the preceding years, when Tesla had to convince customers to take the leap to electric. A reputation for dangerous cars could have bankrupted the company in its early years and would devastate its financial results today. The goodwill Tesla has built with customers would evaporate if it let safety standards slip.

Tesla’s published safety data show its data feedback loop in action: new, smarter Autopilot models are deployed regularly, making the fleet safer over time. Without comparable accident data from other manufacturers, it’s hard to conclude that Tesla’s system is less safe than any other driver assistance system. Setting other manufacturers aside, a Tesla with Autopilot engaged is almost 10 times less likely to be involved in an accident per mile driven than the average vehicle. The notion of a spate of Autopilot-driven accidents is media-driven hyperbole.